I haven’t taken the time to find a great solution here because I’m more interested in creating synthetic datasets. Why you shouldn’t try to create your own this way I’ve used it briefly and it seems very good.

There’s another, more recent, open-source project: COCO Annotator that is worth looking into instead. You might be able to tweak it for your own purposes if you really want to. The code they used can be found here, but I found it to be fairly unusable since it’s all hooked up to AWS and Mechanical Turk. The original researchers used Amazon Mechanical Turk to hire people for SUPER cheap to use a web app and tediously draw shapes around objects. This is how 99%+ of the original COCO dataset was created. You can also, of course, create annotations with vertices. V indicates visibility- v=0: not labeled (in which case x=y=0), v=1: labeled but not visible, and v=2: labeled and visibleĢ29, 256, 2 means there’s a keypoint at pixel x=229, y=256 and 2 indicates that it is a visible keypoint "annotations": [ X and y indicate pixel positions in the image. ,Īnnotations for keypoints are just like in Object Detection (Segmentation) above, except a number of keypoints is specified in sets of 3, (x, y, v). "left_knee","right_knee","left_ankle","right_ankle" "left_wrist","right_wrist","left_hip","right_hip", "left_shoulder","right_shoulder","left_elbow","right_elbow", For example, means "left_ankle" connects to "left_knee".

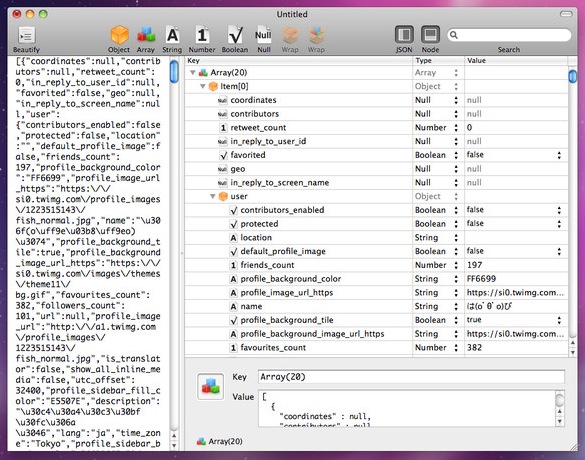

The “skeleton” indicates connections between points. In the case of a person, “keypoints” indicate different body parts. For example a shark (tail, fins, eyes, gills, etc) or a robotic arm (grabber, joints, base). CategoriesĪs of the 2017 version of the dataset, there is only one category (“person”) in the COCO dataset with keypoints, but this could theoretically be expanded to any category that might have different points of interest. They specify a list of points of interest, connections between those points, where those points are within the segmentation, and whether the points are visible. Keypoints add additional information about a segmented object. Is a category id of 1 (which is a person)Ĭorresponds with an image with id 250282 (which is a vintage class photo of about 50 school children) Is a crowd (meaning it’s a group of objects) Has an area of 220834 pixels (much larger) and a bounding box of Has a Run-Length-Encoding style segmentation Is not a crowd (meaning it’s a single object)Ĭorresponds with an image with id 289343 (which is a person on a strange bicycle and a tiny dog) Has an area of 702 pixels (pretty small) and a bounding box of Has a segmentation list of vertices (x, y pixel positions) The following JSON shows 2 different annotations. The category id corresponds to a single category specified in the categories section.Įach annotation also has an id (unique to all other annotations in the dataset). The image id corresponds to a specific image in the dataset. Is Crowd specifies whether the segmentation is for a single object or for a group/cluster of objects. a 10px by 20px box would have an area of 200). I’ll explain how this works later in the article.Īrea is measured in pixels (e.g. Typically, RLE is used for groups of objects (like a large stack of books). 
I say “usually” because regions of interest indicated by these annotations are specified by “segmentations”, which are usually a list of polygon vertices around the object, but can also be a run-length-encoded (RLE) bit mask. Usually this results in a new annotation item for each one. Often there will be multiple instances of an object in an image. For example, if there are 64 bicycles spread out across 100 images, there will be 64 bicycle annotations (along with a ton of annotations for other object categories). It contains a list of every individual object annotation from every image in the dataset. The “annotations” section is the trickiest to understand. The COCO dataset is formatted in JSON and is a collection of “info”, “licenses”, “images”, “annotations”, “categories” (in most cases), and “segment info” (in one case). If you’re not a video person or want more detail, keep reading.
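To make the keypoint format described above a bit more concrete, here is a minimal Python sketch that groups a flat keypoint list into (x, y, v) triples and turns skeleton index pairs into name pairs. The function names `parse_keypoints` and `skeleton_names`, the three-point "robotic arm" category, and all sample values other than 229, 256, 2 are hypothetical and exist only for illustration. The sketch also assumes skeleton indices count from 1, so check that against your own data before relying on it.

```python
# Visibility flag meanings, as described in the keypoint annotation text.
VISIBILITY = {
    0: "not labeled",
    1: "labeled but not visible",
    2: "labeled and visible",
}


def parse_keypoints(flat, names):
    """Group a flat [x1, y1, v1, x2, y2, v2, ...] list into named keypoints."""
    if len(flat) != 3 * len(names):
        raise ValueError("expected 3 values (x, y, v) per keypoint name")
    parsed = []
    for i, name in enumerate(names):
        x, y, v = flat[3 * i: 3 * i + 3]
        parsed.append({"name": name, "x": x, "y": y, "visibility": VISIBILITY[v]})
    return parsed


def skeleton_names(skeleton, names):
    """Translate skeleton index pairs into name pairs.

    Assumption: indices are 1-based, so index 1 is the first keypoint name.
    """
    return [(names[a - 1], names[b - 1]) for a, b in skeleton]


# Hypothetical category with three keypoints (illustration only, not COCO data).
names = ["base", "joint", "grabber"]
flat = [229, 256, 2, 0, 0, 0, 300, 120, 1]
skeleton = [[1, 2], [2, 3]]

for keypoint in parse_keypoints(flat, names):
    print(keypoint)
print(skeleton_names(skeleton, names))
```

Keeping the visibility lookup in a dictionary means an unexpected flag value raises a `KeyError` instead of being silently accepted, which is usually what you want when sanity-checking hand-made annotations.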
